That means there are methods for âhostsâ to give access to part of a single CPU or disk to a âguestâ operating system that thinks itâs all the hardware that exists. If that wasnât complex enough, especially in cloud environments, we often âvirtualizeâ the hardware further so it can be shared across many individual systems in an isolated way. Instead, these resources are managed for us by an operating system. Furthermore, more than one process is using these resources, so our program does not use them directly. This might sound simple, but in modern computers, these resources interact with each other in a complex, nontrivial way.

For example, if we use less CPU time (CPU âresourceâ) or fewer resources with slower access time (e.g., disk), we usually reduce the latency of our software. If the same functionality uses fewer resources, our efficiency increases and the requirements and net cost of running such a program decrease.

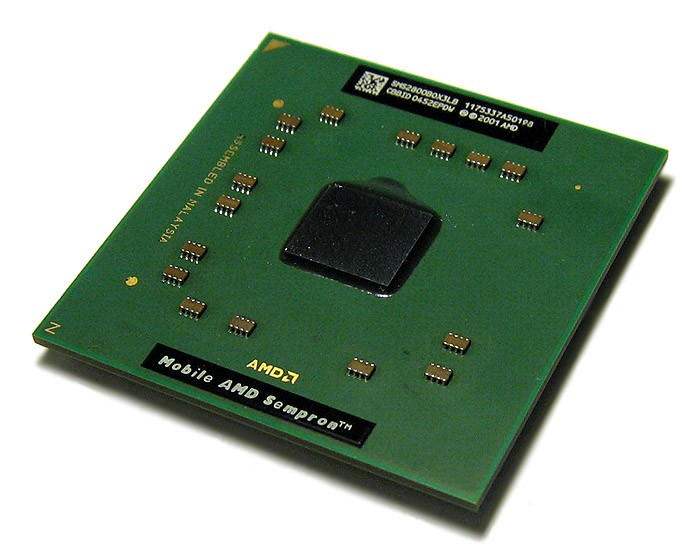

Fowler, Production-Ready Microservices (OâReilly, 2016)Īs you learned in âBehind Performanceâ, software efficiency depends on how our program uses the hardware resources. CPU, memory, data storage, and the network are similar to resources in the natural world: they are finite, they are physical objects in the real world, and they must be distributed and shared between various key players in the ecosystem. One of the most useful abstractions we can make is to treat properties of our hardware and infrastructure systems as resources.
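To make “using fewer resources” concrete, here is a minimal Python sketch, not taken from the original text. It measures one call of a function in three ways: wall-clock latency, CPU time, and peak traced memory. It also asks the operating system how many CPUs this process may run on. The function names (`visible_cpus`, `measure`, `sum_squares_list`, `sum_squares_gen`) are hypothetical and chosen only for illustration. The CPU count comes from the OS, so inside a virtual machine it reflects the virtual CPUs the hypervisor exposes to the guest, not the host’s physical cores. Container CPU quotas (cgroups) are not reflected in it.

```python
import os
import time
import tracemalloc


def visible_cpus() -> int:
    """CPUs the OS lets this process run on (hypothetical helper)."""
    if hasattr(os, "sched_getaffinity"):  # Linux: honors the CPU affinity mask
        return len(os.sched_getaffinity(0))
    return os.cpu_count() or 1  # fallback: logical CPUs reported by the OS


def measure(fn, *args):
    """Return (result, wall_s, cpu_s, peak_bytes) for fn(*args).

    Time and memory are measured in separate runs, because tracemalloc
    itself slows down allocation-heavy code and would distort timings.
    """
    wall_start, cpu_start = time.perf_counter(), time.process_time()
    result = fn(*args)
    cpu = time.process_time() - cpu_start      # CPU time used by this process
    wall = time.perf_counter() - wall_start    # elapsed time (latency)

    tracemalloc.start()
    fn(*args)
    _, peak = tracemalloc.get_traced_memory()  # peak bytes allocated while tracing
    tracemalloc.stop()
    return result, wall, cpu, peak


def sum_squares_list(n: int) -> int:
    # Materializes all n squares in memory before summing them.
    return sum([i * i for i in range(n)])


def sum_squares_gen(n: int) -> int:
    # Same functionality, but produces one square at a time.
    return sum(i * i for i in range(n))


if __name__ == "__main__":
    print("CPUs visible to this process:", visible_cpus())
    for fn in (sum_squares_list, sum_squares_gen):
        result, wall, cpu, peak = measure(fn, 1_000_000)
        print(f"{fn.__name__}: result={result} wall={wall:.3f}s "
              f"cpu={cpu:.3f}s peak={peak / 1e6:.2f} MB")
```

Both functions return the same value. The generator version, however, never holds all the intermediate integers at once, so its peak memory is far lower. This is the “same functionality, fewer resources” trade-off described above. Exact timings vary from run to run and from machine to machine, so treat them as indicative only.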
